Why this pattern

– Webhooks arrive unpredictably, can burst, and must be authenticated.

– WordPress should not face the public webhook directly.

– Async processing ensures resilience, observability, and clean retry semantics.

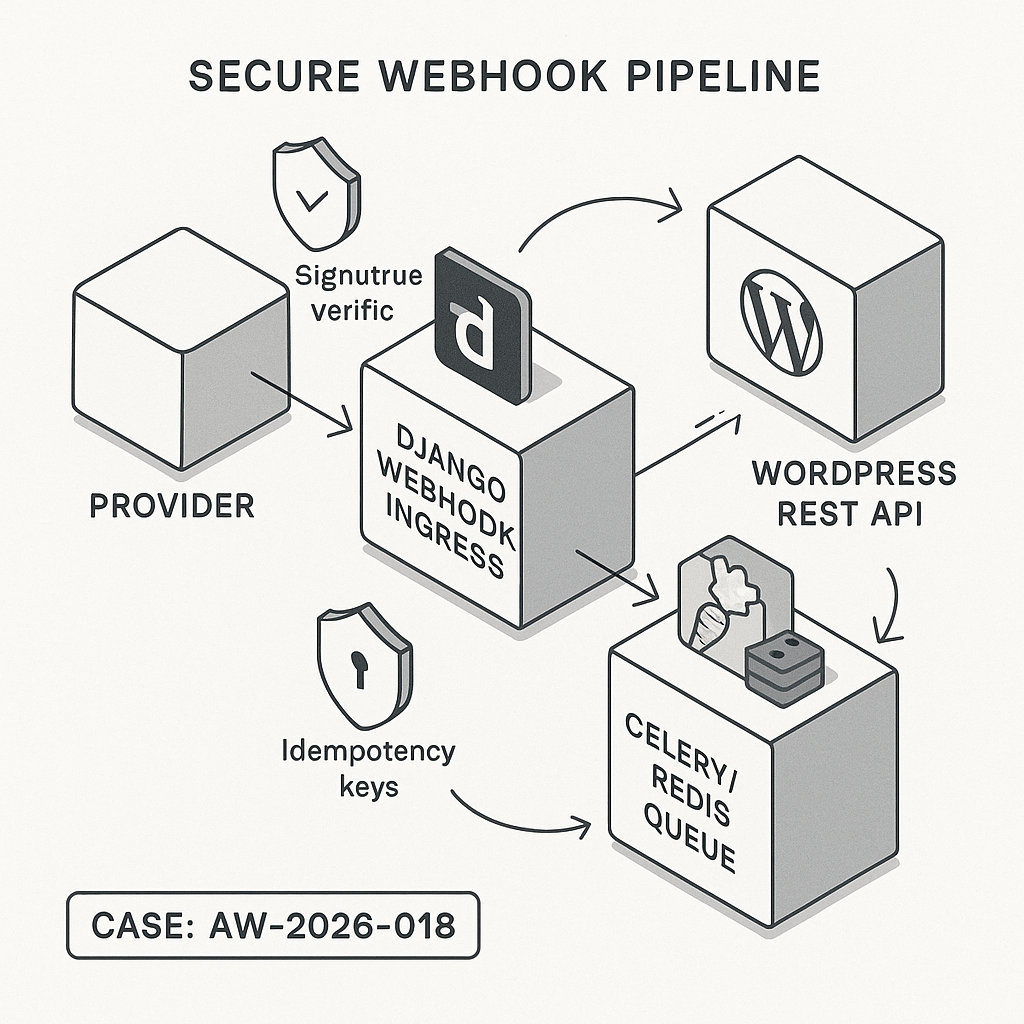

Reference architecture

– External Provider → Django Webhook Ingress → Celery/Redis → Integrations → WordPress REST.

– Optional side writes: S3 for raw payloads, data warehouse, CRM.

– Control plane: secrets storage, circuit breaker, dead-letter queue (DLQ), observability.

Security controls

– Verify signatures (HMAC over timestamp and body) and reject stale timestamps to limit replay.

– Return 202 quickly; push work to a queue.

– Enforce idempotency with the provider's event ID (or a hash of the request body when no ID is supplied), backed by a DB unique constraint.

– Store secrets in environment or a secret manager; rotate regularly.

– Restrict WordPress API with application passwords or JWT + IP allowlist.

– Log structured JSON without PII; hash IDs where possible.
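The timestamp half of the signature check can be sketched as a small freshness test. This is a minimal sketch, assuming the provider sends a Unix-seconds timestamp and that a five-minute window is acceptable; `is_fresh` and `MAX_SKEW_SECONDS` are illustrative names, not part of any provider SDK.

```python
import time
from typing import Optional

# Assumed tolerance; use whatever replay window your provider documents.
MAX_SKEW_SECONDS = 300


def is_fresh(timestamp: str, now: Optional[float] = None,
             max_skew: int = MAX_SKEW_SECONDS) -> bool:
    """Return True when a Unix-seconds timestamp is within max_skew of now."""
    try:
        sent_at = float(timestamp)
    except (TypeError, ValueError):
        return False  # missing or malformed header value
    current = time.time() if now is None else now
    # NaN and infinity compare as False here, so they are rejected too.
    return abs(current - sent_at) <= max_skew
```

Running this check alongside the HMAC comparison means a captured request cannot be replayed once the window has passed.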

Django: minimal webhook ingress

– Accept, verify, enqueue, 202. No heavy logic inline.

    import base64
    import hashlib
    import hmac
    import json
    import os

    from django.db import IntegrityError
    from django.http import HttpResponseBadRequest, HttpResponseNotAllowed, JsonResponse
    from django.utils import timezone
    from django.views.decorators.csrf import csrf_exempt

    from myapp.models import WebhookEvent  # model with unique event_id
    from myapp.tasks import process_webhook  # Celery task shown in the next section (module path assumed)

    PROVIDER_SECRET = os.environ["PROVIDER_WEBHOOK_SECRET"].encode()


    def verify_signature(raw_body: bytes, signature: str, timestamp: str) -> bool:
        # Provider-dependent. Example: base64(HMAC_SHA256(timestamp + "." + body))
        payload = f"{timestamp}.".encode() + raw_body
        mac = hmac.new(PROVIDER_SECRET, payload, hashlib.sha256).digest()
        expected = base64.b64encode(mac)
        # Compare bytes: compare_digest raises TypeError on non-ASCII str input.
        return hmac.compare_digest(expected, signature.encode())


    @csrf_exempt
    def webhook_view(request):
        if request.method != "POST":
            return HttpResponseNotAllowed(["POST"])

        raw = request.body
        sig = request.headers.get("X-Provider-Signature", "")
        ts = request.headers.get("X-Provider-Timestamp", "")
        if not (sig and ts) or not verify_signature(raw, sig, ts):
            return HttpResponseBadRequest("Bad signature")

        try:
            payload = json.loads(raw.decode("utf-8"))
        except ValueError:  # covers UnicodeDecodeError and json.JSONDecodeError
            return HttpResponseBadRequest("Bad JSON")
        if not isinstance(payload, dict):
            return HttpResponseBadRequest("Bad JSON")

        # Fall back to a body hash so retries of an ID-less event still deduplicate.
        event_id = str(payload.get("id") or hashlib.sha256(raw).hexdigest())

        try:
            WebhookEvent.objects.create(
                event_id=event_id,  # unique in DB
                received_at=timezone.now(),
                payload=payload,
                status="queued",
            )
        except IntegrityError:
            return JsonResponse({"status": "duplicate"}, status=200)

        process_webhook.delay(event_id)
        return JsonResponse({"status": "accepted"}, status=202)
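To exercise the view without a live provider, a request can be signed locally with the same example scheme the view verifies. This is a rough sketch: `sign_payload` and `build_signed_request` are hypothetical helper names, and the header names and signing format simply mirror the example above rather than any specific provider.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Dict, Optional, Tuple


def sign_payload(secret: bytes, timestamp: str, raw_body: bytes) -> str:
    """Mirror the example scheme: base64(HMAC_SHA256(timestamp + "." + body))."""
    message = f"{timestamp}.".encode() + raw_body
    mac = hmac.new(secret, message, hashlib.sha256).digest()
    return base64.b64encode(mac).decode()


def build_signed_request(secret: bytes, payload: dict,
                         timestamp: Optional[str] = None) -> Tuple[bytes, Dict[str, str]]:
    """Return (body, headers) ready to POST at the webhook view."""
    ts = timestamp if timestamp is not None else str(int(time.time()))
    # Sign the exact bytes that will be sent; re-serializing later would break the MAC.
    body = json.dumps(payload, separators=(",", ":")).encode("utf-8")
    headers = {
        "Content-Type": "application/json",
        "X-Provider-Signature": sign_payload(secret, ts, body),
        "X-Provider-Timestamp": ts,
    }
    return body, headers
```

The returned body and headers can be sent with any HTTP client. Posting the same pair twice should produce one "accepted" response followed by one "duplicate".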

Celery task: transform and deliver to WordPress

– Retries with exponential backoff.

– Circuit breaker via env flag to pause downstream calls.

    import os

    import requests
    from celery import shared_task
    from requests.auth import HTTPBasicAuth

    from myapp.models import WebhookEvent

    WP_API_BASE = os.environ["WP_API_BASE"]  # e.g. https://site.com/wp-json
    WP_USER = os.environ["WP_USER"]
    WP_APP_PASS = os.environ["WP_APP_PASS"]  # WordPress Application Password


    def wp_auth():
        return HTTPBasicAuth(WP_USER, WP_APP_PASS)


    @shared_task(
        bind=True,
        autoretry_for=(requests.RequestException,),
        retry_backoff=5,
        retry_kwargs={"max_retries": 5},
    )
    def process_webhook(self, event_id: str):
        ev = WebhookEvent.objects.get(event_id=event_id)
        if ev.status in ("processed", "skipped"):
            return  # already handled; safe to call again

        payload = ev.payload

        # Example transform
        record = {
            "source": "provider_x",
            # Ingress may have derived event_id from a body hash when "id" was absent.
            "external_id": str(payload.get("id") or ev.event_id),
            "status": payload.get("status"),
            "summary": (payload.get("summary") or "")[:2000],
            "metadata": payload.get("metadata") or {},
        }

        # Circuit breaker: hold the event instead of calling WordPress.
        if os.environ.get("PAUSE_WP_DELIVERY") == "1":
            ev.status = "held"
            ev.save(update_fields=["status"])
            return

        # Idempotent upsert into WordPress by external_id
        r = requests.post(
            f"{WP_API_BASE}/ai-guy/v1/ingest",
            json=record,
            auth=wp_auth(),
            timeout=10,
            headers={"Idempotency-Key": ev.event_id},
        )

        if r.status_code in (200, 201):
            ev.status = "processed"
            ev.downstream_response = r.text[:4000]
            ev.save(update_fields=["status", "downstream_response"])
        elif r.status_code in (409, 429) or r.status_code >= 500:
            # Raising a RequestException triggers autoretry with backoff.
            raise requests.RequestException(f"retryable {r.status_code}")
        else:
            ev.status = "failed"
            ev.downstream_response = r.text[:4000]
            ev.save(update_fields=["status", "downstream_response"])
            # Optional: send to DLQ or alert
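For a feel of what `retry_backoff=5` with five retries means in wall-clock terms, the delays can be estimated with a few lines of arithmetic. This sketch assumes Celery's documented behavior (delay factor doubled on each retry, capped by `retry_backoff_max`, which defaults to 600 seconds) and ignores jitter; `backoff_schedule` is an illustrative helper, not a Celery API.

```python
from typing import List


def backoff_schedule(factor: int = 5, max_retries: int = 5,
                     maximum: int = 600) -> List[int]:
    """Upper-bound delay in seconds before each retry, without jitter."""
    return [min(maximum, factor * 2 ** attempt) for attempt in range(max_retries)]


delays = backoff_schedule()
print(delays)       # [5, 10, 20, 40, 80]
print(sum(delays))  # 155
```

With jitter left at its default, each actual delay is drawn at random up to these values, so an event that keeps failing gives up after at most roughly two and a half minutes of waiting. That bound is worth comparing against how long a WordPress outage usually lasts before choosing `max_retries`.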

Django model (compact)

    from django.db import models


    class WebhookEvent(models.Model):
        # unique=True already creates an index, so db_index is not needed here.
        event_id = models.CharField(max_length=128, unique=True)
        received_at = models.DateTimeField()
        status = models.CharField(max_length=16, db_index=True)
        payload = models.JSONField()
        downstream_response = models.TextField(blank=True, default="")

WordPress: create a safe REST endpoint

– Use custom namespace and application passwords.

– Validate, sanitize, and upsert by external_id.

– Keep it fast. No remote calls inside this handler.

    add_action('rest_api_init', function () {
        register_rest_route('ai-guy/v1', '/ingest', array(
            'methods' => 'POST',
            'callback' => 'aiguy_ingest',
            'permission_callback' => function ($request) {
                return current_user_can('edit_posts');
            },
            'args' => array(
                'external_id' => array('required' => true, 'type' => 'string'),
                'status' => array('required' => false, 'type' => 'string'),
                'summary' => array('required' => false, 'type' => 'string'),
                'metadata' => array('required' => false, 'type' => 'object'),
            ),
        ));
    });

    function aiguy_ingest(WP_REST_Request $req) {
        $ext_id = sanitize_text_field($req->get_param('external_id'));
        $summary = wp_kses_post($req->get_param('summary') ?: '');
        $status = sanitize_text_field($req->get_param('status') ?: 'new');
        $meta = $req->get_param('metadata') ?: array();

        // update_post_meta()/add_post_meta() unslash their input, so slash the
        // JSON first to keep escaped characters intact.
        $meta_json = wp_slash(wp_json_encode($meta, JSON_UNESCAPED_SLASHES));

        // Idempotency via external_id + meta key
        $existing = get_posts(array(
            'post_type' => 'ai_event',
            'meta_key' => 'external_id',
            'meta_value' => $ext_id,
            'posts_per_page' => 1,
            'fields' => 'ids',
        ));

        if ($existing) {
            $post_id = $existing[0];
            update_post_meta($post_id, 'status', $status);
            update_post_meta($post_id, 'metadata', $meta_json);
            wp_update_post(array('ID' => $post_id, 'post_excerpt' => wp_trim_words($summary, 55)));
            return new WP_REST_Response(array('updated' => $post_id), 200);
        }

        // Pass true so a failure comes back as WP_Error instead of 0.
        $post_id = wp_insert_post(array(
            'post_type' => 'ai_event',
            'post_title' => 'AI Event ' . $ext_id,
            'post_status' => 'publish',
            'post_content' => '',
            'post_excerpt' => wp_trim_words($summary, 55),
        ), true);

        if (is_wp_error($post_id)) {
            return new WP_Error('insert_failed', 'Could not create post', array('status' => 500));
        }

        add_post_meta($post_id, 'external_id', $ext_id, true);
        add_post_meta($post_id, 'status', $status, true);
        add_post_meta($post_id, 'metadata', $meta_json, true);

        return new WP_REST_Response(array('created' => $post_id), 201);
    }

WordPress hardening

– Create a custom post type ai_event with limited capabilities.

– Restrict route with application passwords assigned to a dedicated low-privilege user.

– Optionally check Idempotency-Key header to reject duplicates quickly.

– Add rate limiting via a small transient-based token bucket if needed.
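The token bucket mentioned in the last bullet is easier to reason about in isolation. The sketch below shows the algorithm in Python for consistency with the Django examples; a WordPress version would presumably keep the same two values (token count and last-update time) in a transient keyed per client. `TokenBucket` and `allow` are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class TokenBucket:
    capacity: float           # maximum burst size
    refill_per_second: float  # sustained request rate
    tokens: float             # tokens currently available
    updated_at: float         # time of the last refill, in seconds


def allow(bucket: TokenBucket, now: float, cost: float = 1.0) -> bool:
    """Refill based on elapsed time, then spend `cost` tokens if available."""
    elapsed = max(0.0, now - bucket.updated_at)
    bucket.tokens = min(bucket.capacity,
                        bucket.tokens + elapsed * bucket.refill_per_second)
    bucket.updated_at = max(bucket.updated_at, now)
    if bucket.tokens >= cost:
        bucket.tokens -= cost
        return True
    return False  # caller should answer 429
```

A bucket with capacity 2 and a refill rate of 1 per second lets two requests through back to back, rejects a third, and admits one more after a second has passed.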

Operational playbook

– Local dev: use ngrok for Django endpoint; record/replay with stored JSON.

– Monitoring: emit structured logs with request_id, event_id, duration, and status. Export Celery metrics alongside 5xx rate, latency, and queue depth.

– Backpressure: scale Celery workers; use Redis priority queues; set max concurrency for WordPress deliveries.

– Data durability: persist raw payloads to S3 before processing for replays.

– Failure handling: 5xx/429 responses trigger Celery retries; once retries are exhausted, write the event to a DLQ table and alert (for example, in Slack).
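The monitoring bullet, combined with the earlier advice to hash IDs, can be sketched as a single log-line builder. This is one possible shape, not a prescribed format: `hash_id`, `log_line`, and the field names are assumptions, and the keyed hash uses a separate logging key so hashed IDs cannot be reversed by anyone who only sees the logs.

```python
import hashlib
import hmac
import json


def hash_id(value: str, key: bytes) -> str:
    """Keyed, truncated hash so IDs can be correlated without being exposed."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def log_line(event: str, *, request_id: str, event_id: str, status: str,
             duration_ms: float, key: bytes) -> str:
    """Build one JSON log line; the raw event_id never appears in it."""
    record = {
        "event": event,
        "request_id": request_id,
        "event_id_hash": hash_id(event_id, key),
        "status": status,
        "duration_ms": round(duration_ms, 1),
    }
    return json.dumps(record, sort_keys=True)
```

Emitting the same `event_id_hash` from both the ingress view and the Celery task lets a log query follow one event across the queue boundary.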

Performance tips

– Keep webhook handler under 50 ms by deferring all heavy work.

– Enable gzip and HTTP keep-alive; reuse a single requests.Session per worker so deliveries share a connection pool.

– Batch WordPress updates when possible; or use a single custom table if volume is high.

– Add DB indexes on event_id and post meta keys; consider a dedicated ingestion table for constant-time lookups.

Configuration checklist

– Provider secret set in Django; WordPress app password created and scoped.

– Redis + Celery configured with a visibility timeout longer than the longest task runtime plus the longest retry countdown, so in-flight tasks are not redelivered.

– HTTPS everywhere; Django CSRF exempt only for the webhook route.

– Regular key rotation and secret scanning enabled in CI.
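The Redis and Celery item in the checklist might translate into settings along these lines. The setting names are standard Celery configuration keys, but every value here (broker URL, timeouts, limits) is a placeholder assumption to be tuned per deployment.

```python
# Assumed figures for illustration; measure your own tasks before choosing.
MAX_TASK_RUNTIME_SECONDS = 60           # hard ceiling for one delivery attempt
LONGEST_RETRY_COUNTDOWN_SECONDS = 600   # Celery's default retry_backoff_max

CELERY_CONFIG = {
    "broker_url": "redis://localhost:6379/0",  # placeholder
    # Must exceed runtime plus the longest countdown, or Redis redelivers the task.
    "broker_transport_options": {"visibility_timeout": 3600},
    "task_time_limit": MAX_TASK_RUNTIME_SECONDS,
    "task_acks_late": True,           # re-queue if a worker dies mid-task
    "worker_prefetch_multiplier": 1,  # avoid hoarding tasks during bursts
}

assert (CELERY_CONFIG["broker_transport_options"]["visibility_timeout"]
        > MAX_TASK_RUNTIME_SECONDS + LONGEST_RETRY_COUNTDOWN_SECONDS)
```

The dictionary can be applied with `app.conf.update(CELERY_CONFIG)` on the Celery application. Late acknowledgement is only safe because the task is idempotent, as it is here.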

What you get

– A safe, observable, and scalable webhook fabric from AI providers into WordPress.

– Clear separation of concerns: ingress, processing, and delivery.

– Production defaults: verification, idempotency, retries, and minimal blast radius.

This is a very solid and scalable pattern; decoupling the public-facing ingress from WordPress is a critical security measure. For the ingress component itself, have you experimented with a serverless approach like AWS Lambda or Cloud Functions?

Good question — serverless can work really well for the ingress, especially when bursty traffic is the main concern. The big tradeoffs I’ve seen are around signature/auth verification (you still need careful timestamp/nonce handling), cold starts (can bite strict provider timeouts), and retries/idempotency (provider retries + platform retries means you want a clear dedupe key and a DLQ path). Observability can be better or worse depending on how you wire tracing/log correlation, and VPC/networking constraints (private Redis/DB, egress IP allowlists) can add latency/complexity compared to a Django service sitting “near” its queue and data store.

What webhook volume/burst profile are you expecting, and which provider(s) + their timeout/retry behavior (e.g., must respond <3s vs <10s)?

That’s a fantastic summary of the tradeoffs; I was thinking of a high-volume but infrequent burst profile where the provider’s generous retry policy made the serverless approach a good fit.

That spiky-but-infrequent profile is where Lambda/Functions can shine, especially if the provider will patiently retry while you drain a queue. Do you know the provider’s required response window and whether their retries are strictly ordered (or can arrive out of order / concurrently)? Also, do you have any downstream dependency that’s sensitive to ordering (e.g., per-user/thread state updates in WordPress or a single “latest status” record)?

As a pragmatic next step, I’d aim to return 202 fast from the function and immediately enqueue (SQS/PubSub) with a DLQ, using a stable idempotency key (the provider event ID, or a hash of the raw body when no ID is supplied) so provider retries and platform retries collapse cleanly. I’d avoid folding the timestamp or signature into the key if the provider re-signs each retry, since every attempt would then look unique. That keeps the ingress thin while preserving the same security and retry semantics you outlined.

Thank you, that is a very helpful and pragmatic approach; I will need to investigate the provider’s response window and retry ordering as you suggested.