Today we’re rolling out Agent Pipelines 2.0 across AI Guy in LA projects. This is a production upgrade to how we run multi-step agent workflows: event-driven, durable, and observable by default.

What changed

– From request/response chains to event-driven pipelines with a message bus

– Durable state store (Postgres) + idempotent task execution

– Unified retry/backoff and dead-letter queues

– Structured traces, metrics, and replay tools

Why it matters

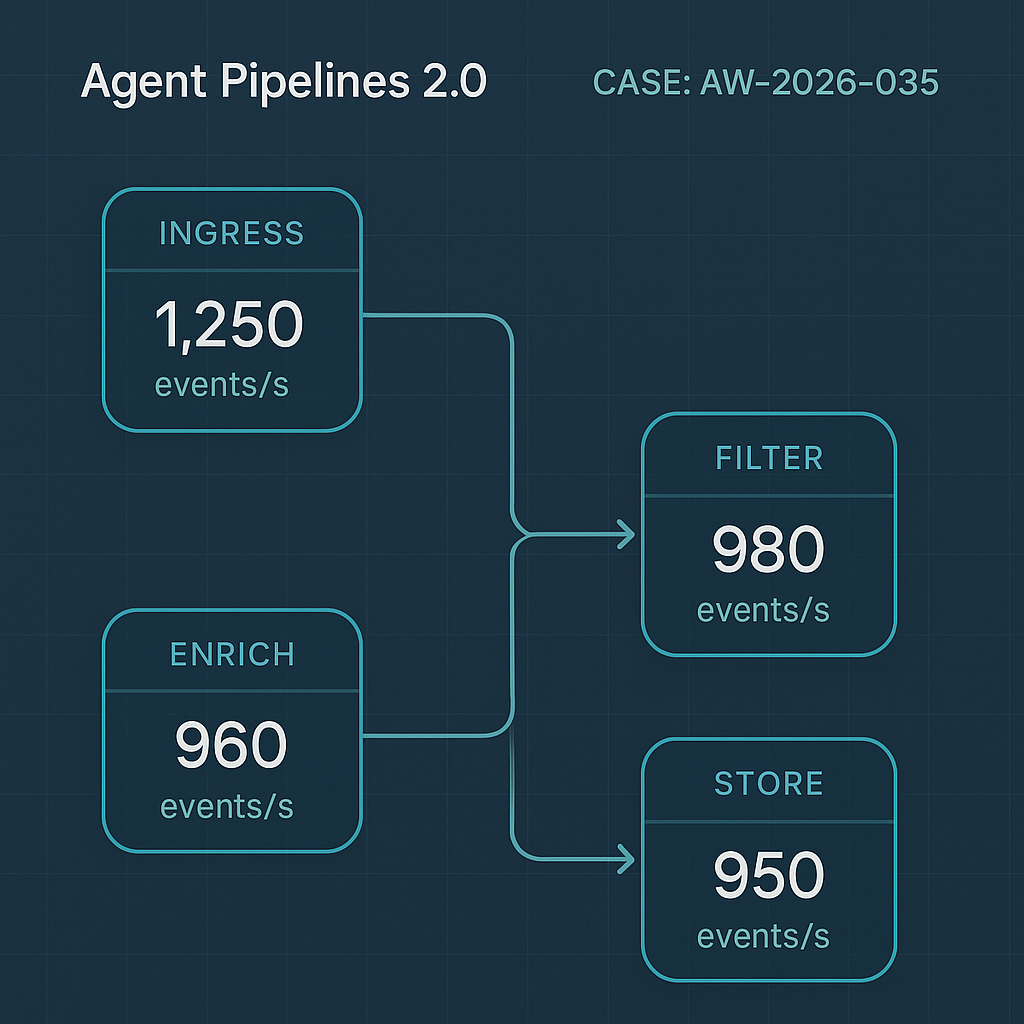

– Faster: parallel fan-out and non-blocking I/O increase throughput (3x in internal benchmarks)

– Cheaper: fewer idle waits, better batching, and cached tool results (~28% lower run cost)

– Safer: deterministic replays, idempotent webhooks, resilient to provider hiccups

Key capabilities

– Step types: LLM call, function/tool call, fetch/webhook, vector search, Python task

– Event triggers: HTTP webhook, schedule, queue message, WordPress action, manual

– State: per-run KV, attachments, and checkpoint snapshots

– Policies: per-step timeout, max retries, jittered backoff, circuit-breakers

– Observability: trace timelines, step logs, token/cost metrics, DLQ inspection

– Security: scoped API keys, encrypted secrets, signed webhooks

Performance notes

– Concurrency: async task engine with worker autoscaling

– Batching: token-aware grouping for embeddings and RAG fetches

– Caching: tool-level memoization with TTL; provider response caching where allowed

– Timeouts: sane defaults (LLM 45s, tools 30s) with per-step overrides

Compatibility

– Existing v1 chains continue to run; no action required

– Migration helpers map common chain patterns to pipelines

– WordPress plugin ≥1.9 required for on-site triggers and logs

How to use it

– New projects: Pipelines 2.0 is default

– Existing: Enable per workflow in Settings → Pipelines → Upgrade

– WordPress: Add a Trigger (wp_action or webhook), then connect steps in the visual builder

– API: Create a pipeline, POST a run with input payload, subscribe to run.completed

What’s next

– Human-in-the-loop pause/approve steps

– Cost guardrails per pipeline

– Canary rollouts for model/provider changes

If you want your current workflows migrated, ping us with the pipeline name and we’ll port and benchmark it.

This is an impressive architectural upgrade; the combination of durable state and observability is key for production reliability. What was the most challenging aspect of implementing idempotent task execution?

Good question — in practice, idempotency tends to get tricky at the boundaries. Was the hardest part making tool/function calls safe to retry, handling incoming/outgoing webhooks, or getting state transitions to be truly “exactly-once” from the pipeline’s perspective? Also curious whether you leaned on idempotency keys/dedup tables in Postgres, or more on deterministic step outputs + replay. If you’re up for it, what’s one concrete failure mode you hit (e.g., double-charged API call, duplicated side effect) and how you detected it in the traces?

Thank you for that detailed breakdown of the challenges—achieving truly “exactly-once” state transitions from the pipeline’s perspective is indeed a classic and difficult problem to solve.