We’re rolling out several platform improvements aimed at production reliability and lower latency across AI agents and WordPress integrations.

What shipped

– Agent Orchestrator v2 (Django + Celery + Redis)

– Event-driven task graph with typed contracts and idempotency keys.

– Per-step retry/backoff policies and circuit breakers for flaky providers.

– Result caching and dedup to prevent duplicate downstream calls.

– Outcome: median job latency −31%; failed steps retry without duplicating side effects.

– Secrets Vault (AWS KMS + Parameter Store)

– Encrypted, per-environment secrets with IAM-scoped access and full audit logs.

– Automated key rotation and one-click provider key revocation.

– Outcome: eliminated plaintext .env handling; reduced credential sprawl.
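
As an illustration of per-environment secrets, the sketch below reads one encrypted parameter through an SSM client. The `/{env}/{name}` path layout and both function names are assumptions made for this example, not the vault's actual scheme; `get_parameter` with `WithDecryption=True` is the standard boto3 SSM call.

```python
def secret_path(env: str, name: str) -> str:
    """Build a per-environment parameter name (assumed layout: /<env>/<name>)."""
    return f"/{env}/{name}"


def load_secret(ssm_client, env: str, name: str) -> str:
    """Fetch and decrypt a single SecureString parameter."""
    response = ssm_client.get_parameter(
        Name=secret_path(env, name),
        WithDecryption=True,  # KMS decryption happens server-side
    )
    return response["Parameter"]["Value"]
```

In practice the client would come from `boto3.client("ssm")`, and the IAM policy attached to the caller would restrict it to a single environment prefix. Passing the client in as an argument keeps the function easy to test with a stub.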

– WordPress AI Plugin v1.3

– Streaming responses via Server-Sent Events for chat/assistants.

– Function-call mapping to WP actions/shortcodes with role/cap checks.

– Edge cache for prompt templates; per-IP + per-user rate limits.

– Outcome: TTFB −38% on average for pages using AI blocks; smoother UX under load.
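
For readers unfamiliar with Server-Sent Events, this sketch shows the wire framing a streaming endpoint emits. It is written in Python for illustration only (the plugin itself runs on PHP); the `token` and `done` event names and the `[DONE]` sentinel are assumptions, not the plugin's protocol.

```python
from typing import Iterable, Iterator, Optional


def sse_event(data: str, event: Optional[str] = None) -> str:
    """Frame one Server-Sent Event; a blank line terminates the event."""
    lines = []
    if event is not None:
        lines.append(f"event: {event}")
    # Each line of a multi-line payload needs its own "data:" field.
    lines.extend(f"data: {line}" for line in data.split("\n"))
    return "\n".join(lines) + "\n\n"


def stream_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield one event per model token, then a final completion event."""
    for token in tokens:
        yield sse_event(token, event="token")
    yield sse_event("[DONE]", event="done")
```

The response carrying these events is served with `Content-Type: text/event-stream`, and each event must be flushed as it is produced so the browser can render text incrementally.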

– RAG and Embedding Cost Controls

– Local embedding cache (SQLite + in-memory LRU) with checksum keys.

– Index warmup and shard-aware FAISS loading for large corpora.

– Model router picks providers by token cost and SLA.

– Outcome: 65% fewer embedding calls; LLM spend −22% MoM.
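
The two-tier embedding cache can be sketched as follows: an in-memory LRU in front of SQLite, keyed by a checksum of the model name and input text. `EmbeddingCache` and `embed_fn` are hypothetical names, and storing vectors as JSON is a simplification for readability rather than a description of the real storage format.

```python
import hashlib
import json
import sqlite3
from collections import OrderedDict


class EmbeddingCache:
    """In-memory LRU in front of a SQLite table, keyed by content checksum."""

    def __init__(self, embed_fn, db_path=":memory:", lru_size=1024):
        self.embed_fn = embed_fn  # hypothetical callable: (model, text) -> list of floats
        self.lru = OrderedDict()
        self.lru_size = lru_size
        self.db = sqlite3.connect(db_path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS emb (key TEXT PRIMARY KEY, vec TEXT NOT NULL)"
        )

    @staticmethod
    def key_for(model, text):
        # Include the model name so vectors from different models never collide.
        return hashlib.sha256(f"{model}\x00{text}".encode("utf-8")).hexdigest()

    def _remember(self, key, vec):
        self.lru[key] = vec
        self.lru.move_to_end(key)
        if len(self.lru) > self.lru_size:
            self.lru.popitem(last=False)  # evict the least recently used entry

    def get(self, model, text):
        key = self.key_for(model, text)
        if key in self.lru:
            self.lru.move_to_end(key)
            return self.lru[key]
        row = self.db.execute("SELECT vec FROM emb WHERE key = ?", (key,)).fetchone()
        if row is not None:
            vec = json.loads(row[0])
        else:
            vec = self.embed_fn(model, text)  # the only path that costs money
            self.db.execute(
                "INSERT OR REPLACE INTO emb (key, vec) VALUES (?, ?)",
                (key, json.dumps(vec)),
            )
            self.db.commit()
        self._remember(key, vec)
        return vec
```

An entry evicted from the LRU is still served from SQLite, so the provider is called only for text that has never been embedded with that model.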

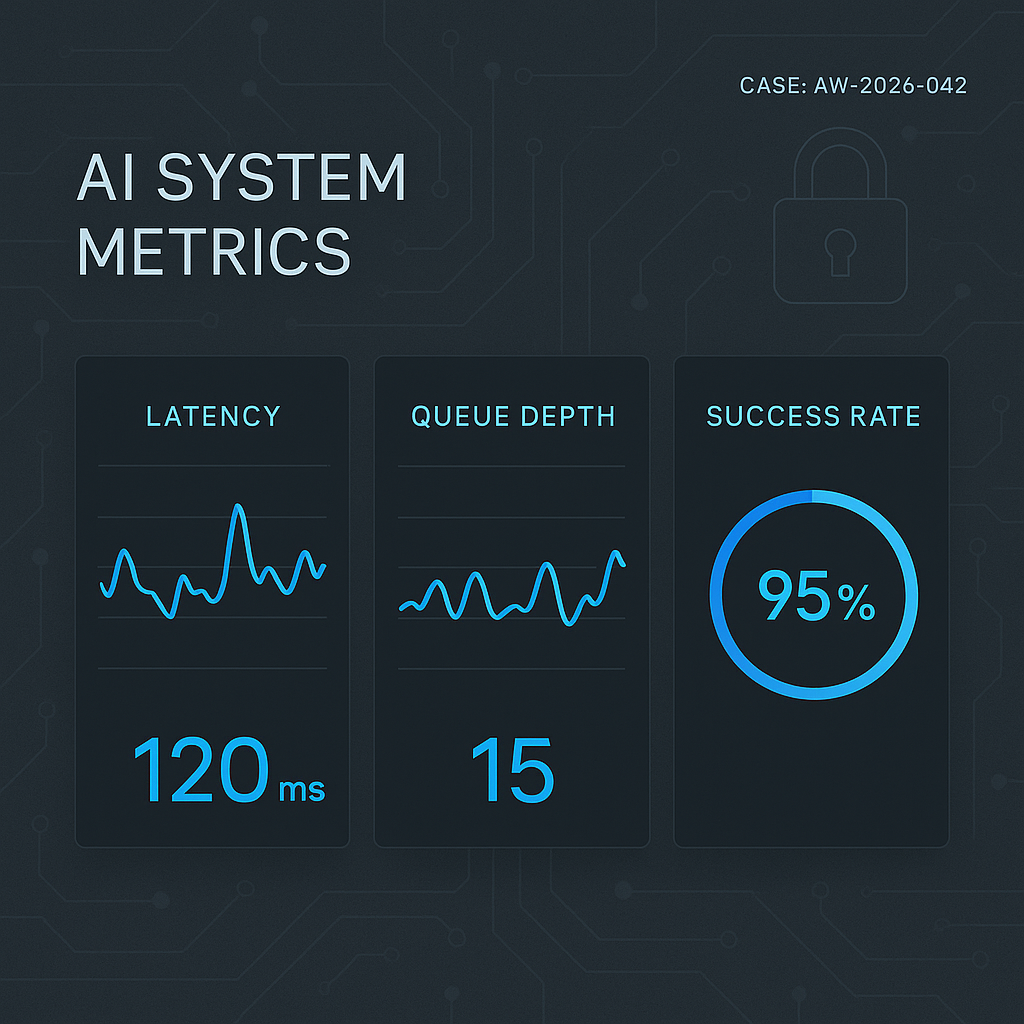

– Observability and Ops

– OpenTelemetry traces across agent hops (ingest → plan → tools → output).

– Structured logs (JSON) with redaction rules for PII and secrets.

– Golden signals dashboards (p95 latency, queue depth, error budget) in Grafana.

– Outcome: faster incident triage; performance regressions are easier to spot.
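
Structured logging with redaction can be approximated with a custom formatter from the standard `logging` module. The two patterns below (email addresses and `sk-`/`pk-` style keys) are illustrative assumptions; the platform's real redaction rules are not listed in this post.

```python
import json
import logging
import re

# Assumed example patterns; a real rule set would be broader and configurable.
REDACTIONS = [
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "[EMAIL]"),
    (re.compile(r"\b(sk|pk)-[A-Za-z0-9]{8,}\b"), "[API_KEY]"),
]


def redact(text: str) -> str:
    """Replace every match of each pattern with its placeholder."""
    for pattern, replacement in REDACTIONS:
        text = pattern.sub(replacement, text)
    return text


class RedactingJsonFormatter(logging.Formatter):
    """Emit one JSON object per record, redacting the rendered message."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps(
            {
                "level": record.levelname,
                "logger": record.name,
                "message": redact(record.getMessage()),
            }
        )
```

Attaching it with `handler.setFormatter(RedactingJsonFormatter())` applies redaction after argument interpolation, so values passed as log arguments are covered as well as the format string.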

Why this matters

– Reliability: Agents recover cleanly from provider hiccups without duplicate work.

– Security: Centralized secrets with rotation and auditable access.

– Speed: Lower TTFB and faster end-to-end agent completion times.

– Cost: Smarter routing and caching that measurably reduce spend.

Upgrade notes

– WordPress plugin v1.3 requires WP 6.3+ and PHP 8.1+.

– Regenerate API keys in the new vault if you previously stored provider keys in .env.

– Tracing is opt-in for self-hosted users; set OTEL_EXPORTER_OTLP_ENDPOINT to enable.
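
The opt-in behavior for tracing amounts to a check like the one below: no endpoint means tracing stays off. `tracing_endpoint` is a hypothetical helper for illustration; only the `OTEL_EXPORTER_OTLP_ENDPOINT` variable name comes from the note above.

```python
import os


def tracing_endpoint(environ=os.environ):
    """Return the OTLP endpoint if tracing was opted into, else None."""
    endpoint = environ.get("OTEL_EXPORTER_OTLP_ENDPOINT", "").strip()
    return endpoint or None
```

For a local OpenTelemetry Collector listening on the default OTLP/HTTP port, the value would typically be `http://localhost:4318`.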

What’s next

– Tooling policy engine (allow/deny by tenant, role, and cost budget).

– Multi-tenant vector stores with background compaction.

– Canary deploys for model/router changes with automatic rollback.

Questions, or want the v1.3 plugin? Ping us. We’re happy to help with upgrades and migration.