Why you need a proxy

– Keeps vendor keys off WordPress.

– Centralizes auth, rate limits, caching, and retries.

– Enables streaming and uniform observability across sites.

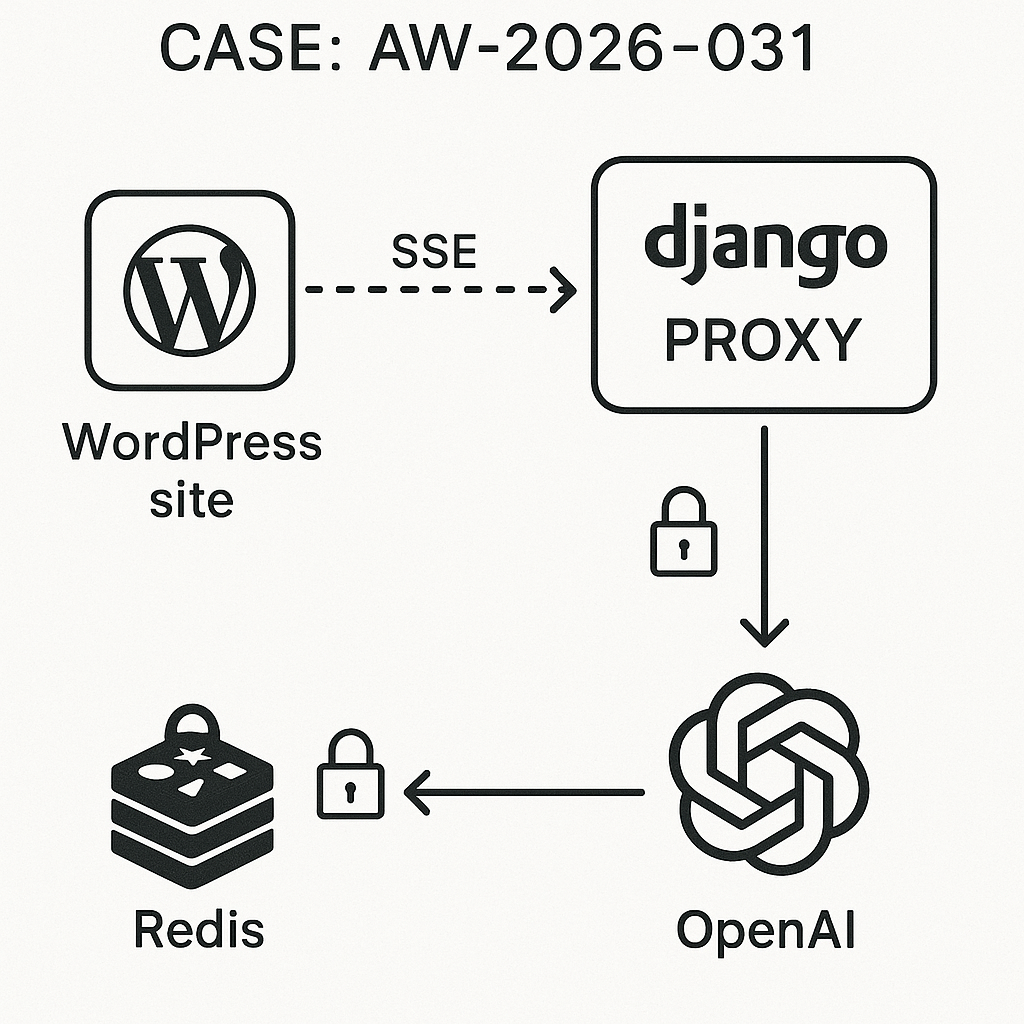

High-level architecture

– WordPress site(s) → Proxy (Django ASGI) → Provider APIs (OpenAI/Anthropic/etc.)

– Redis for rate limits, idempotency, and response cache.

– Postgres optional for audit logs.

– Cloudflare → NGINX → Uvicorn (Django ASGI).

Security model

– Per-site API key pair: site_id + site_secret (stored in WP).

– Request signature: HMAC-SHA256 over body + timestamp.

– JWT issued by proxy for short-lived sessions (optional).

– Nonce in WordPress UI, capability checks for settings.

– IP allowlist and user-agent tagging for WordPress clients.

– Enforce TLS end-to-end.
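The signing scheme in the list above can be sketched with the standard library alone. The function names `sign_request` and `verify_request`, the 60-second skew limit, and the byte layout (raw body followed by the decimal timestamp) are assumptions chosen to match the rest of this post, not a fixed specification.

```python
import hashlib
import hmac
import time
from typing import Optional, Tuple


def sign_request(body: bytes, site_secret: bytes, ts: Optional[int] = None) -> Tuple[str, str]:
    """Return (timestamp, hex signature) for HMAC-SHA256 over body + timestamp."""
    ts_str = str(int(time.time()) if ts is None else ts)
    sig = hmac.new(site_secret, body + ts_str.encode(), hashlib.sha256).hexdigest()
    return ts_str, sig


def verify_request(body: bytes, ts: str, sig: str, site_secret: bytes,
                   max_skew: int = 60, now: Optional[int] = None) -> bool:
    """Check freshness first, then compare signatures in constant time."""
    current = int(time.time()) if now is None else now
    try:
        if abs(current - int(ts)) > max_skew:
            return False  # stale or future-dated request: possible replay
    except (TypeError, ValueError):
        return False  # missing or non-numeric timestamp
    expected = hmac.new(site_secret, body + ts.encode(), hashlib.sha256).hexdigest()
    # Compare as bytes so a non-ASCII signature is rejected rather than raising.
    return hmac.compare_digest(expected.encode(), sig.encode())
```

Signing the exact bytes that go on the wire matters: if the client re-serializes the JSON after signing, the signature no longer matches.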

Django proxy (ASGI) essentials

– Django 5.x, Python 3.11+, Redis, httpx (async), uvicorn.

– Endpoints:

  – POST /v1/chat (stream or non-stream)

  – POST /v1/embeddings

  – GET /v1/models (capability discovery)

– Headers:

  – X-Site-ID, X-Timestamp, X-Signature, X-Client-Request-ID
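Before any signature check, the proxy needs to know whether the headers are present at all. A minimal sketch follows; the helper name `extract_proxy_headers` is hypothetical, and treating X-Client-Request-ID as optional is an assumption, since the post does not say which headers are mandatory.

```python
from typing import Dict, List, Mapping, Tuple

REQUIRED_HEADERS = ("X-Site-ID", "X-Timestamp", "X-Signature")
OPTIONAL_HEADERS = ("X-Client-Request-ID",)  # assumption: only used for idempotency


def extract_proxy_headers(headers: Mapping[str, str]) -> Tuple[Dict[str, str], List[str]]:
    """Return (found, missing). Lookup is case-insensitive, as HTTP header names are."""
    lowered = {k.lower(): v.strip() for k, v in headers.items()}
    found: Dict[str, str] = {}
    missing: List[str] = []
    for name in REQUIRED_HEADERS:
        value = lowered.get(name.lower(), "")
        if value:
            found[name] = value
        else:
            missing.append(name)
    for name in OPTIONAL_HEADERS:
        value = lowered.get(name.lower(), "")
        if value:
            found[name] = value
    return found, missing
```

A non-empty `missing` list would typically map to a 400 response before any HMAC work is done.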

Example models.py (optional audit)

from django.db import models

class InferenceLog(models.Model):
    request_id = models.CharField(max_length=64, db_index=True)
    site_id = models.CharField(max_length=64, db_index=True)
    route = models.CharField(max_length=32)
    prompt_hash = models.CharField(max_length=64, db_index=True)
    provider = models.CharField(max_length=32)
    tokens_in = models.IntegerField(default=0)
    tokens_out = models.IntegerField(default=0)
    status = models.IntegerField()
    elapsed_ms = models.IntegerField()
    created_at = models.DateTimeField(auto_now_add=True)
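The model stores a `prompt_hash` rather than the prompt itself. One plausible way to fill that field is sketched below; the canonical-JSON encoding and the helper name `prompt_hash` are assumptions, as the post does not say how the hash is computed.

```python
import hashlib
import json
from typing import Dict, List


def prompt_hash(messages: List[Dict]) -> str:
    """SHA-256 over a canonical JSON encoding; the 64 hex chars fit max_length=64."""
    # sort_keys and fixed separators make the hash independent of key order and spacing.
    canonical = json.dumps(messages, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Two requests with the same messages then share a hash, which makes repeated prompts easy to spot in the audit log without retaining their text.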

Rate limiting (Redis, sliding window)

– Key: rl:{site_id}:{route}

– Allow N requests per window, e.g., 60/min, 600/hour.

– Return 429 with Retry-After.
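To make the sliding-window idea concrete without a Redis server, here is an in-memory sketch that keeps one timestamp per request and derives a Retry-After value. The class name `SlidingWindowLimiter` is hypothetical, and a deque stands in for the Redis sorted set, so this suits a single process only.

```python
import math
import time
from collections import defaultdict, deque
from typing import Optional, Tuple


class SlidingWindowLimiter:
    """In-memory stand-in for the Redis sorted set: one timestamp per request."""

    def __init__(self, limit: int = 60, window: float = 60.0):
        self.limit = limit
        self.window = window
        self._hits = defaultdict(deque)

    def check(self, site_id: str, route: str, now: Optional[float] = None) -> Tuple[bool, int]:
        """Return (allowed, retry_after_seconds)."""
        now = time.time() if now is None else now
        hits = self._hits[f"rl:{site_id}:{route}"]
        # Drop timestamps that have slid out of the window.
        while hits and hits[0] <= now - self.window:
            hits.popleft()
        if len(hits) < self.limit:
            hits.append(now)
            return True, 0
        # The oldest hit leaves the window at hits[0] + window.
        retry_after = max(1, math.ceil(hits[0] + self.window - now))
        return False, retry_after
```

Unlike a fixed window, rejected requests here are not recorded, so a client that keeps retrying does not extend its own lockout.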

Pseudo-implementation (views.py, chat streaming)

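import asyncio, hashlib, hmac, json, os, time, uuid

import httpx
import redis
from django.http import JsonResponse, StreamingHttpResponse
from django.utils.crypto import constant_time_compare
from django.views.decorators.csrf import csrf_exempt

r = redis.Redis.from_url(os.environ["REDIS_URL"], decode_responses=False)
PROVIDER_KEY = os.environ["PROVIDER_KEY"]
HMAC_SECRET = os.environ["HMAC_SECRET"].encode()
PROVIDER_URL = "https://api.openai.com/v1/chat/completions"

def verify_sig(raw, ts, sig):
    # Reject missing or non-numeric timestamps and anything outside a 60 s replay window.
    try:
        if abs(int(time.time()) - int(ts)) > 60:
            return False
    except (TypeError, ValueError):
        return False
    mac = hmac.new(HMAC_SECRET, raw + ts.encode(), hashlib.sha256).hexdigest()
    return constant_time_compare(mac, sig)

def rl_allow(site_id, route, limit=60, window=60):
    # Sliding window on a sorted set: one member per request, scored by arrival time.
    key = f"rl:{site_id}:{route}"
    now = time.time()
    pipe = r.pipeline()
    pipe.zremrangebyscore(key, 0, now - window)
    pipe.zadd(key, {uuid.uuid4().hex: now})  # unique member so same-second requests all count
    pipe.zcard(key)
    pipe.expire(key, window)
    _, _, count, _ = pipe.execute()
    return count <= limit

# The source listing is truncated between rl_allow() and the retry loop. The view
# header and the streaming loop down to "attempt > 3" are a reconstruction; the
# view name, error bodies, and cache-key layout are assumptions.
@csrf_exempt
async def chat(request):
    raw = request.body
    site_id = request.headers.get("X-Site-ID", "")
    ts = request.headers.get("X-Timestamp", "")
    if not verify_sig(raw, ts, request.headers.get("X-Signature", "")):
        return JsonResponse({"error": "bad_signature"}, status=401)
    if not rl_allow(site_id, "chat"):
        return JsonResponse({"error": "rate_limited"}, status=429, headers={"Retry-After": "60"})
    try:
        payload = json.loads(raw)
    except ValueError:
        return JsonResponse({"error": "invalid_json"}, status=400)
    prompt = payload.get("messages", [])
    stream = bool(payload.get("stream"))
    body = {"model": payload.get("model", "gpt-4o-mini"), "messages": prompt}
    headers = {"Authorization": f"Bearer {PROVIDER_KEY}"}
    ck = f"cache:{site_id}:{hashlib.sha256(raw).hexdigest()}"

    async def gen():
        attempt, backoff, sent = 0, 0.5, False
        while True:
            attempt += 1
            try:
                async with httpx.AsyncClient(timeout=60) as client:
                    async with client.stream("POST", PROVIDER_URL, headers=headers,
                                             json={**body, "stream": True}) as resp:
                        resp.raise_for_status()
                        async for chunk in resp.aiter_bytes():
                            sent = True
                            yield chunk
                break
            except httpx.HTTPError:
                # Never retry once bytes have reached the client; that would duplicate output.
                if sent or attempt > 3:
                    yield b'event: error\ndata: {"message":"upstream_failed"}\n\n'
                    break
                await asyncio.sleep(backoff)
                backoff *= 2
        yield b"event: done\ndata: {}\n\n"

    if stream:
        return StreamingHttpResponse(gen(), content_type="text/event-stream")
    else:
        # Non-stream path
        cached = r.get(ck)
        if cached:
            return JsonResponse(json.loads(cached))
        async with httpx.AsyncClient(timeout=60) as client:
            resp = await client.post(PROVIDER_URL, headers=headers, json=body)
        data = resp.json()
        if resp.status_code == 200:
            r.setex(ck, 60, json.dumps(data))  # cache successful responses only
        return JsonResponse(data, status=resp.status_code)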
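The error and done frames in the listing above are hand-written byte strings, which makes it easy to drop a newline. A small helper for building Server-Sent Events frames is sketched here; the name `sse_event` is hypothetical and is not part of the original listing.

```python
import json


def sse_event(data, event=None) -> bytes:
    """Encode one SSE frame: optional event name, data line(s), then a blank line."""
    text = data if isinstance(data, str) else json.dumps(data, separators=(",", ":"))
    lines = []
    if event:
        lines.append(f"event: {event}")
    # A payload containing newlines must be split across several data: lines.
    for part in text.split("\n"):
        lines.append(f"data: {part}")
    return ("\n".join(lines) + "\n\n").encode("utf-8")
```

With this, the two terminal frames become `sse_event({"message": "upstream_failed"}, event="error")` and `sse_event({}, event="done")`.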

NGINX (SSE buffering)

– proxy_buffering off;

– proxy_read_timeout 300s;

– add_header Cache-Control no-cache;

Gunicorn/Uvicorn

– uvicorn app.asgi:application --host 0.0.0.0 --port 8000 --workers 2 --loop uvloop --http h11

WordPress plugin (minimal)

– Stores Proxy Base URL, Site ID, Site Secret.

– Adds a shortcode [ai_chat] that renders a simple chat box.

– Uses SSE via an EventSource polyfill to stream responses (native EventSource cannot send POST bodies or custom headers).

– Nonces for AJAX init; sanitize all options; only admins can edit.

Plugin main file (ai-proxy-chat/ai-proxy-chat.php)

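<?php
/**
 * Plugin Name: AI Proxy Chat
 */
// The top of this listing (plugin header, class declaration, constructor hooks) was
// lost in the source and is reconstructed from the methods below; the option name
// is an assumption.
class AIG_Proxy_Chat {
    const OPT = 'aig_proxy_chat';

    public function __construct() {
        add_action('admin_menu', [$this, 'menu']);
        add_action('admin_init', [$this, 'register']);
        add_action('wp_enqueue_scripts', [$this, 'assets']);
        add_shortcode('ai_chat', [$this, 'shortcode']);
        add_action('rest_api_init', function () {
            register_rest_route('aig/v1', '/sig', [
                'methods' => 'POST',
                'permission_callback' => function () {
                    $nonce = sanitize_text_field(wp_unslash($_POST['_wpnonce'] ?? ''));
                    return (bool) wp_verify_nonce($nonce, 'aig_sig');
                },
                'callback' => [$this, 'sign'],
            ]);
        });
    }

    public function menu() {
        add_options_page('AI Proxy Chat', 'AI Proxy Chat', 'manage_options', 'aig-proxy-chat', [$this, 'settings']);
    }

    public function register() {
        register_setting(self::OPT, self::OPT, ['sanitize_callback' => [$this, 'sanitize']]);
        add_settings_section('main', 'Settings', '__return_false', 'aig-proxy-chat');
        add_settings_field('base_url', 'Proxy Base URL', [$this, 'field'], 'aig-proxy-chat', 'main', ['k' => 'base_url']);
        add_settings_field('site_id', 'Site ID', [$this, 'field'], 'aig-proxy-chat', 'main', ['k' => 'site_id']);
        add_settings_field('site_secret', 'Site Secret', [$this, 'field'], 'aig-proxy-chat', 'main', ['k' => 'site_secret']);
    }

    public function sanitize($v) {
        return [
            'base_url' => esc_url_raw($v['base_url'] ?? ''),
            'site_id' => sanitize_text_field($v['site_id'] ?? ''),
            'site_secret' => sanitize_text_field($v['site_secret'] ?? ''),
        ];
    }

    public function field($args) {
        $o = get_option(self::OPT, []);
        $k = $args['k'];
        $type = $k === 'site_secret' ? 'password' : 'text';
        // Format string reconstructed to match the four arguments that survived.
        printf('<input type="%s" name="%s[%s]" value="%s" class="regular-text" />',
            esc_attr($type), esc_attr(self::OPT), esc_attr($k), esc_attr($o[$k] ?? ''));
    }

    public function settings() {
        echo '<div class="wrap"><h1>AI Proxy Chat</h1><form method="post" action="options.php">';
        settings_fields(self::OPT); do_settings_sections('aig-proxy-chat'); submit_button();
        echo '</form></div>';
    }

    public function assets() {
        wp_register_script('aig-chat', plugins_url('chat.js', __FILE__), [], '1.0', true);
        wp_localize_script('aig-chat', 'AIG_CHAT', [
            'nonce' => wp_create_nonce('aig_sig'),
            'sigEndpoint' => rest_url('aig/v1/sig'),
        ]);
    }

    public function shortcode() {
        wp_enqueue_script('aig-chat');
        // Markup reconstructed from the element ids that chat.js looks up.
        ob_start(); ?>
        <div id="aig-chat">
            <div id="aig-log"></div>
            <form id="aig-form"><input id="aig-input" type="text" autocomplete="off" /><button type="submit">Send</button></form>
        </div>
        <?php
        return ob_get_clean();
    }

    // Signs the request body server-side so the site secret never reaches the browser.
    public function sign($req) {
        $o = get_option(self::OPT, []);
        $body = $req->get_param('body') ?? '';
        $ts = time();
        $sig = hash_hmac('sha256', $body . $ts, $o['site_secret'] ?? '');
        return ['ts' => $ts, 'sig' => $sig, 'site_id' => $o['site_id'] ?? '', 'base_url' => $o['base_url'] ?? ''];
    }
}

new AIG_Proxy_Chat();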

Client JS (ai-proxy-chat/chat.js)

(function () {
  // Append one line to the log; textContent keeps model output from being parsed as HTML.
  const log = (t) => {
    const el = document.getElementById('aig-log');
    const line = document.createElement('div');
    line.textContent = t;
    el.appendChild(line);
    el.scrollTop = el.scrollHeight;
  };

  document.addEventListener('submit', async (e) => {
    if (e.target.id !== 'aig-form') return;
    e.preventDefault();
    const input = document.getElementById('aig-input');
    const msg = input.value.trim(); if (!msg) return;
    log('You: ' + msg); input.value = '';

    // Decide on streaming before signing: the signature covers the exact body,
    // so the fallback path cannot change the stream flag afterwards.
    // This assumes a separately loaded polyfill that accepts `headers` and `payload`.
    const canStream = typeof EventSourcePolyfill !== 'undefined';
    const body = JSON.stringify({model: 'gpt-4o-mini', stream: canStream, messages: [{role: 'user', content: msg}]});

    const sigRes = await fetch(AIG_CHAT.sigEndpoint, {
      method: 'POST', credentials: 'same-origin',
      headers: {'Content-Type': 'application/x-www-form-urlencoded'},
      body: new URLSearchParams({_wpnonce: AIG_CHAT.nonce, body})
    });
    const {ts, sig, site_id, base_url} = await sigRes.json();
    const url = base_url.replace(/\/+$/, '') + '/v1/chat';
    const headers = {'X-Site-ID': site_id, 'X-Timestamp': String(ts), 'X-Signature': sig, 'Content-Type': 'application/json'};

    if (canStream) {
      const es = new EventSourcePolyfill(url, {headers, payload: body});
      let acc = '';
      es.onmessage = (ev) => { try {
        const d = JSON.parse(ev.data);
        const delta = d.choices?.[0]?.delta?.content || d.choices?.[0]?.message?.content || '';
        if (delta) { acc += delta; log(delta); }
      } catch (_) {} };
      es.addEventListener('done', () => { log(''); es.close(); });
      es.addEventListener('error', () => { log('Stream error'); es.close(); });
    } else {
      // Fallback: POST then append
      const res = await fetch(url, {method: 'POST', headers, body});
      const data = await res.json();
      const text = data.choices?.[0]?.message?.content || '[no content]';
      log(text); log('');
    }
  }, true);
})();
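The delta-accumulation logic in the client is easier to test outside a browser. The Python sketch below parses SSE lines and joins the `choices[0].delta.content` fragments the way the handler above does; `iter_sse` and `collect_text` are hypothetical names, and the frame shapes are assumed to follow the OpenAI-style chunks used elsewhere in this post.

```python
import json
from typing import Iterable, Iterator, Tuple


def iter_sse(lines: Iterable[str]) -> Iterator[Tuple[str, str]]:
    """Yield (event, data) pairs from decoded SSE lines; a blank line ends a frame."""
    event, data = "message", []
    for line in lines:
        line = line.rstrip("\r\n")
        if not line:
            if data:
                yield event, "\n".join(data)
            event, data = "message", []
        elif line.startswith("event:"):
            event = line[len("event:"):].strip()
        elif line.startswith("data:"):
            value = line[len("data:"):]
            # The SSE format strips exactly one leading space after the colon.
            data.append(value[1:] if value.startswith(" ") else value)


def collect_text(lines: Iterable[str]) -> str:
    """Concatenate delta content across frames until a 'done' event arrives."""
    acc = []
    for event, data in iter_sse(lines):
        if event == "done":
            break
        try:
            choice = (json.loads(data).get("choices") or [{}])[0]
        except (ValueError, AttributeError):
            continue  # skip non-JSON frames such as "[DONE]"
        acc.append((choice.get("delta") or {}).get("content") or "")
    return "".join(acc)
```

Feeding it recorded proxy output is a cheap way to check that the proxy's frames are well-formed before debugging the browser side.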

Hardening checklist

– WordPress: escape output, sanitize options, restrict settings to manage_options, use nonces everywhere.

– Proxy: validate JSON schema, enforce token limits, redact PII in logs, cap request size (e.g., 256KB), timeouts + retries, 429/503 behavior.

– NGINX: limit_req by IP as outer guard; set client_max_body_size 512k.

– Redis: use ACLs and TLS; set maxmemory with allkeys-lru for cache eviction.

– Keys: rotate provider keys; per-site secrets; revoke on abuse.

– Observability: request_id header, structured JSON logs, latency and token metrics.
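The proxy-side items (size cap, schema validation) can be sketched as one function that runs before any provider call. The 256 KB cap comes from the checklist; the message-count limit, the allowed roles, and the error codes are assumptions for illustration.

```python
import json

MAX_BODY_BYTES = 256 * 1024   # size cap suggested in the checklist
MAX_MESSAGES = 50             # hypothetical limit, tune per deployment
ALLOWED_ROLES = {"system", "user", "assistant"}


def validate_chat_payload(raw: bytes):
    """Return (status, result): (200, payload) if valid, else (4xx, {"error": ...})."""
    if len(raw) > MAX_BODY_BYTES:
        return 413, {"error": "body_too_large"}
    try:
        payload = json.loads(raw)
    except ValueError:  # covers JSONDecodeError and invalid UTF-8
        return 400, {"error": "invalid_json"}
    if not isinstance(payload, dict):
        return 400, {"error": "body_must_be_object"}
    messages = payload.get("messages")
    if not isinstance(messages, list) or not 1 <= len(messages) <= MAX_MESSAGES:
        return 400, {"error": "invalid_messages"}
    for m in messages:
        role = m.get("role") if isinstance(m, dict) else None
        if not isinstance(role, str) or role not in ALLOWED_ROLES:
            return 400, {"error": "invalid_role"}
        if not isinstance(m.get("content"), str):
            return 400, {"error": "invalid_content"}
    if not isinstance(payload.get("stream", False), bool):
        return 400, {"error": "invalid_stream_flag"}
    return 200, payload
```

Checking the byte length before parsing keeps oversized bodies from ever reaching the JSON decoder.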

Performance notes

– Streaming path: TTFB ~80–150 ms via proxy; throughput limited by provider stream.

– Non-stream with cache: ~5–15 ms from Redis hit.

– Uvicorn workers scale horizontally; keep WordPress PHP-FPM unchanged.

When to extend

– Add /v1/embeddings with response cache and vector store indexing.

– Add model routing policy and quota per site.

– Add document upload pipeline (signed URLs, antivirus, OCR) before LLM.
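As a rough illustration of the routing-policy and quota item, the sketch below keeps a per-site policy in memory. Everything here is hypothetical: the `SitePolicy` fields, the fall-back-to-default rule, and the token-based daily quota are one possible design, not something the post prescribes.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class SitePolicy:
    allowed_models: Tuple[str, ...]
    default_model: str
    daily_token_quota: int
    tokens_used: int = 0


def route_request(policy: SitePolicy, requested_model: Optional[str],
                  estimated_tokens: int) -> Tuple[Optional[str], Optional[str]]:
    """Return (model, error). Unlisted models fall back to the site's default."""
    if policy.tokens_used + estimated_tokens > policy.daily_token_quota:
        return None, "quota_exceeded"
    if requested_model in policy.allowed_models:
        model = requested_model
    else:
        model = policy.default_model
    # Reserve the estimate up front; a real system would reconcile with actual usage.
    policy.tokens_used += estimated_tokens
    return model, None
```

In production the counters would live in Redis or Postgres so that all workers see the same usage.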