Why this pattern

– WordPress should not do heavy webhook processing.

– Verify and accept fast at the edge; do the work in a backend worker.

– Enforce idempotency, signatures, and bounded latency.

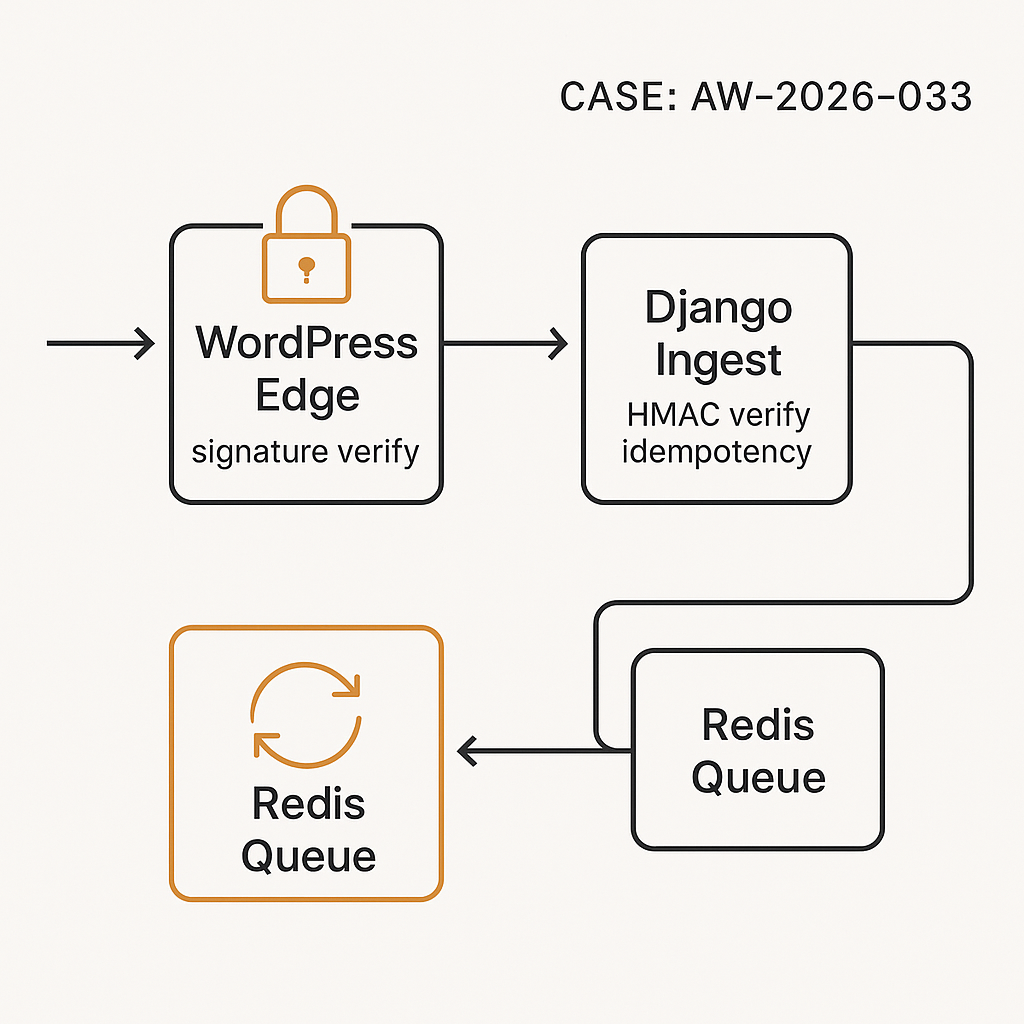

Architecture

– Providers → WordPress REST endpoint (/wp-json/ai/v1/webhook)

– WordPress validates provider signature and schema, returns 202

– WordPress relays event to Django ingest with short‑lived HMAC and an idempotency key

– Django verifies HMAC, checks idempotency, enqueues (Redis + Celery/RQ)

– Worker executes task, stores results, and emits metrics/logs

Security controls

– Provider-to-WordPress: verify provider HMAC or RSA signature using shared secret/public key

– WordPress-to-Django: HMAC-SHA256 over timestamp.nonce.body using a shared secret; reject timestamps older than 1–2 minutes

– Strict content-type and body size limits (e.g., 256 KB)

– Allowlist the provider's published webhook IPs where possible

– Schema validation on both sides; never trust upstream fields
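The WordPress-to-Django control above can be sketched end to end in a few lines. This is a minimal illustration rather than the plugin's actual code: the function names `sign_relay` and `verify_relay`, the `now` parameter (added only so the example is testable), and the 120-second default are assumptions chosen to match the 1–2 minute window described above.

```python
import hashlib
import hmac
import secrets
import time


def sign_relay(raw: bytes, secret: bytes, now=None) -> dict:
    """Sign timestamp.nonce.body, as the edge would before relaying."""
    ts = str(int(time.time() if now is None else now))
    nonce = secrets.token_hex(8)
    base = ts.encode() + b"." + nonce.encode() + b"." + raw
    sig = hmac.new(secret, base, hashlib.sha256).hexdigest()
    return {"ts": ts, "nonce": nonce, "sig": sig}


def verify_relay(raw: bytes, headers: dict, secret: bytes, ttl: int = 120, now=None) -> bool:
    """Recompute the HMAC and reject timestamps outside the ttl window."""
    try:
        ts = int(headers["ts"])
    except (KeyError, TypeError, ValueError):
        return False
    current = time.time() if now is None else now
    if abs(current - ts) > ttl:
        return False
    nonce = str(headers.get("nonce", ""))
    base = str(ts).encode() + b"." + nonce.encode() + b"." + raw
    expected = hmac.new(secret, base, hashlib.sha256).hexdigest()
    # Constant-time comparison on bytes
    return hmac.compare_digest(expected.encode(), str(headers.get("sig", "")).encode())
```

Signing the raw bytes, not a re-serialized payload, matters here: any change in whitespace or key order between the two sides would otherwise break the signature.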

Idempotency

– All events carry an idempotency key (provider’s event_id or derived SHA256)

– WordPress rejects obvious duplicates (LRU cache or transient)

– Django stores first-seen key with status; subsequent ones become no-ops
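A rough sketch of the key derivation and first-seen check follows. The `FirstSeenStore` class is a hypothetical in-memory stand-in for the transient or the unique database column, and serializing with sorted keys before hashing is an assumption added here so that key order does not change the derived hash.

```python
import hashlib
import json


def idempotency_key(payload: dict) -> str:
    """Prefer the provider's event id; otherwise hash a canonical form."""
    if "id" in payload:
        return f"prov:{payload['id']}"
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return "hash:" + hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class FirstSeenStore:
    """In-memory stand-in for the transient / unique-key table."""

    def __init__(self):
        self._status = {}

    def claim(self, key: str) -> bool:
        """Return True the first time a key is seen, False afterwards."""
        if key in self._status:
            return False
        self._status[key] = "queued"
        return True
```

A caller would enqueue work only when `claim()` returns True and treat every later delivery with the same key as a no-op.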

WordPress: minimal plugin (REST endpoint + signature verify + relay)

– Create a small mu-plugin or plugin file.

PHP (wp-content/plugins/ai-webhook-edge/ai-webhook-edge.php):

<?php
// Register POST /wp-json/ai/v1/webhook
add_action('rest_api_init', function () {
    register_rest_route('ai/v1', '/webhook', [
        'methods' => 'POST',
        'callback' => 'ai_webhook_handle',
        // Public route: the provider signature check in the handler is the gate
        'permission_callback' => '__return_true',
        'args' => [],
        'schema' => null
    ]);
});

function ai_get_raw_body() {
    return file_get_contents('php://input');
}

function ai_verify_provider_signature($raw, $sig, $secret) {
    // Example: HMAC in header X-Signature: sha256=hex
    if (!is_string($sig) || $sig === '') return false;
    $calc = 'sha256=' . hash_hmac('sha256', $raw, $secret);
    // Constant-time compare
    return hash_equals($calc, $sig);
}

function ai_idempotency_key($payload) {
    if (isset($payload['id'])) return 'prov:' . $payload['id'];
    return 'hash:' . hash('sha256', json_encode($payload));
}

function ai_seen_before($key) {
    // Use transients for 1 day; replace with custom table for high volume
    $exists = get_transient('ai_webhook_' . $key);
    if ($exists) return true;
    set_transient('ai_webhook_' . $key, '1', DAY_IN_SECONDS);
    return false;
}

function ai_sign_to_django($raw, $secret) {
    $ts = time();
    $nonce = bin2hex(random_bytes(8));
    $base = $ts . '.' . $nonce . '.' . $raw;
    $sig = hash_hmac('sha256', $base, $secret);
    return ['ts' => $ts, 'nonce' => $nonce, 'sig' => $sig];
}

function ai_webhook_handle($request) {
    $raw = ai_get_raw_body();
    if (strlen($raw) > 262144) return new WP_REST_Response(['error' => 'payload too large'], 413);

    // Parenthesized: PHP 8 rejects unparenthesized nested ternaries
    $provider_secret = getenv('PROVIDER_WEBHOOK_SECRET')
        ?: (defined('PROVIDER_WEBHOOK_SECRET') ? PROVIDER_WEBHOOK_SECRET : null);
    if (!$provider_secret) return new WP_REST_Response(['error' => 'server misconfigured'], 500);

    // get_header() normalizes the name; get_headers() keys use underscores, not hyphens
    $provider_sig = $request->get_header('x-signature');
    if (!ai_verify_provider_signature($raw, $provider_sig, $provider_secret)) {
        return new WP_REST_Response(['error' => 'invalid signature'], 401);
    }

    $json = json_decode($raw, true);
    if (!is_array($json)) return new WP_REST_Response(['error' => 'invalid json'], 400);

    // Basic schema guard
    if (!isset($json['type']) || !isset($json['data'])) {
        return new WP_REST_Response(['error' => 'invalid schema'], 422);
    }

    $key = ai_idempotency_key($json);
    if (ai_seen_before($key)) {
        return new WP_REST_Response(['status' => 'duplicate'], 202);
    }

    // Relay to Django
    $django_url = getenv('DJANGO_INGEST_URL') ?: 'https://backend.example.com/ingest/webhook';
    $django_secret = getenv('DJANGO_SHARED_SECRET');
    if (!$django_secret) return new WP_REST_Response(['error' => 'server misconfigured'], 500);

    $sig = ai_sign_to_django($raw, $django_secret);
    $args = [
        'timeout' => 2,
        'redirection' => 0,
        'headers' => [
            'Content-Type' => 'application/json',
            'X-Idempotency-Key' => $key,
            'X-Edge-Signature' => $sig['sig'],
            'X-Edge-Timestamp' => (string) $sig['ts'],
            'X-Edge-Nonce' => $sig['nonce'],
        ],
        'body' => $raw,
    ];

    $resp = wp_remote_post($django_url, $args);
    if (is_wp_error($resp)) {
        // Log the relay failure, but don't fail the response
        error_log('ai-webhook-edge relay failed: ' . $resp->get_error_message());
    }

    // Accept at the edge regardless of backend outcome (relay is bounded by the 2 s timeout)
    return new WP_REST_Response(['status' => 'accepted'], 202);
}

Django ingest: verify, idempotency, enqueue

– Use Django REST Framework (DRF), Redis, and Celery.

settings.py (relevant):

– Ensure DJANGO_SHARED_SECRET is set, and enforce body size limits at the web server (e.g., Nginx client_max_body_size 256k)

models.py:

from django.db import models


class WebhookEvent(models.Model):
    idempotency_key = models.CharField(max_length=128, unique=True)
    received_at = models.DateTimeField(auto_now_add=True)
    payload = models.JSONField()
    status = models.CharField(max_length=16, default='queued')  # queued, done, err
    error = models.TextField(null=True, blank=True)

views.py:

import hashlib
import hmac
import time

from django.conf import settings
from django.db import IntegrityError, transaction
from rest_framework import status
from rest_framework.decorators import api_view
from rest_framework.response import Response

from .models import WebhookEvent
from .tasks import process_event


def verify_edge_signature(raw, ts, nonce, sig, secret):
    try:
        ts = int(ts)
    except (TypeError, ValueError):
        return False
    # Reject timestamps outside the 2-minute window
    if abs(time.time() - ts) > 120:
        return False
    # Sign the raw bytes as received; mirrors $ts . '.' . $nonce . '.' . $raw on the PHP side
    base = f"{ts}.{nonce}.".encode() + raw
    calc = hmac.new(secret.encode(), base, hashlib.sha256).hexdigest()
    # Constant-time compare on bytes
    return hmac.compare_digest(calc.encode(), sig.encode())


@api_view(['POST'])
def ingest_webhook(request):
    # Read the raw body before request.data so the signed bytes are available
    raw = request.body
    ts = request.headers.get('X-Edge-Timestamp')
    nonce = request.headers.get('X-Edge-Nonce')
    sig = request.headers.get('X-Edge-Signature')
    idem = request.headers.get('X-Idempotency-Key')

    if not (ts and nonce and sig and idem):
        return Response({'error': 'missing headers'}, status=status.HTTP_400_BAD_REQUEST)

    if not verify_edge_signature(raw, ts, nonce, sig, settings.DJANGO_SHARED_SECRET):
        return Response({'error': 'invalid signature'}, status=status.HTTP_401_UNAUTHORIZED)

    try:
        # The unique constraint on idempotency_key makes the first insert win
        with transaction.atomic():
            evt = WebhookEvent.objects.create(
                idempotency_key=idem,
                payload=request.data,
                status='queued'
            )
    except IntegrityError:
        return Response({'status': 'duplicate'}, status=status.HTTP_202_ACCEPTED)

    process_event.delay(evt.id)
    return Response({'status': 'queued'}, status=status.HTTP_202_ACCEPTED)

tasks.py (Celery):

from celery import shared_task
from django.db import transaction

from .models import WebhookEvent


@shared_task(bind=True, autoretry_for=(Exception,), retry_backoff=2, retry_jitter=True, max_retries=5)
def process_event(self, event_id):
    evt = WebhookEvent.objects.get(id=event_id)
    try:
        # Route by evt.payload['type']
        # Example: upsert CRM record or trigger LLM pipeline
        with transaction.atomic():
            # … do work …
            evt.status = 'done'
            evt.error = None
            evt.save(update_fields=['status', 'error'])
    except Exception as e:
        # Record the failure, then re-raise so Celery schedules the retry
        evt.status = 'err'
        evt.error = str(e)
        evt.save(update_fields=['status', 'error'])
        raise

Operational notes

– Return 202 at the edge regardless of backend outcome

– Enforce 2s timeout on edge-to-backend relay; log but don’t fail the response

– Use Nginx/Cloudflare to rate-limit by provider IP and path

– Add request/response logs with event_id, idem_key, trace_id

– Metrics: accepted, duplicates, queue depth, processing latency, failures, retry counts

– Dead-letter: if max retries exceeded, move payload to a dlq table or topic for manual replay

– Backfill/replay: build an admin view in Django to re-enqueue events
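To make the bounded-retry and dead-letter notes concrete, here is a small sketch of the decision a worker faces after each failure. It is not Celery's implementation; `retry_delay`, `next_step`, the 2-second base, and the 600-second cap are illustrative assumptions, and `max_retries=5` simply mirrors the task decorator shown earlier.

```python
import random


def retry_delay(attempt: int, base: float = 2.0, cap: float = 600.0, rng=random) -> float:
    """Exponential backoff with jitter: uniform in [0, min(cap, base * 2**attempt)]."""
    ceiling = min(cap, base * (2 ** attempt))
    return rng.uniform(0.0, ceiling)


def next_step(attempt: int, max_retries: int = 5):
    """Decide what to do after a failure; attempt counts retries already made."""
    if attempt >= max_retries:
        # Retry budget exhausted: hand the event to the dead-letter store
        return ("dead_letter", None)
    return ("retry", retry_delay(attempt))
```

Randomizing the delay spreads retries out, so a burst of failures caused by one backend outage does not come back as a synchronized burst of retries.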

Validation tips

– Maintain per-provider signature logic and secrets

– Validate payloads with Pydantic or DRF serializers; coerce types and reject unknown fields if needed

– Strip/ignore personally identifiable data you do not need
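As a dependency-free illustration of "reject unknown fields", the sketch below uses plain Python as a stand-in for a Pydantic model or DRF serializer. The field names follow the `type`/`data`/`id` keys used in the plugin code above; `validate_event` and the two field sets are hypothetical names.

```python
ALLOWED_FIELDS = {"id", "type", "data"}
REQUIRED_FIELDS = {"type", "data"}


def validate_event(payload):
    """Return a list of error strings; an empty list means the payload passed."""
    if not isinstance(payload, dict):
        return ["payload must be a JSON object"]
    errors = []
    missing = REQUIRED_FIELDS - set(payload)
    unknown = set(payload) - ALLOWED_FIELDS
    errors += [f"missing field: {name}" for name in sorted(missing)]
    errors += [f"unknown field: {name}" for name in sorted(unknown)]
    if "type" in payload and not isinstance(payload["type"], str):
        errors.append("type must be a string")
    if "data" in payload and not isinstance(payload["data"], dict):
        errors.append("data must be an object")
    return errors
```

Keeping the allowlist small also helps with the last tip: fields that are never accepted are never stored.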

When to use this pattern

– High-volume webhook sources (Stripe, Slack, CRM events)

– Any AI automation where processing might take >100 ms

– WordPress sites that must stay responsive while backend workers scale independently

Performance checklist

– 202 at edge in <50 ms, excluding the relay call (bounded by its 2 s timeout); O(1) work only

– HMAC verification runs over the raw request body bytes, before any JSON parsing

– Duplicate suppression at both layers

– Async processing with bounded retries and jitter

– Observability wired before launch

This is a great pattern for keeping the public-facing CMS responsive while ensuring webhook integrity. What is your strategy for handling retries or failed jobs after they’ve been enqueued by the Django worker?

Good question—how are you handling retries/backoff once the job is in the queue (e.g., exponential with jitter, max attempts, and a visibility timeout/ack strategy)? Do you route hard failures to a dead-letter queue/store for later inspection/replay, and if so what’s your retention policy? Also curious whether your idempotency key scheme is designed to stay stable across provider retries *and* internal replays from the worker, so a “retry storm” can’t cause duplicate side effects.

We handle those cases with an exponential backoff strategy and a stable idempotency key, routing persistent failures to a dead-letter queue for manual review.

Nice — that covers the core failure modes well. For the exponential backoff, what are you using for max attempts and do you add jitter to avoid synchronized retry spikes? On the DLQ side, how long do you retain events, and what does your replay flow look like (e.g., one-click requeue vs. a controlled batch with throttling)? Also, do you have alerting/observability around “DLQ growth,” retry rate, and age-of-oldest message so you catch issues before the queue backs up?

Great questions—we use full jitter on retries, a throttled batch replay from the DLQ, and have alerts on all those key metrics.

Thanks for clarifying — full jitter + throttled replay + alerts is a solid combo. Out of curiosity, what concrete bounds are you using in production (e.g., max attempts and the total retry window before DLQ), and who “owns” DLQ retention (time-based purge vs. manual cleanup)? Also, how long do you keep DLQ payloads/metadata around to support safe replays and audits? If you’re willing, a tiny example config (Celery/RQ retry settings + DLQ retention) would be really helpful to make it tangible.

Our retry windows and retention periods are service-specific, generally aligned with our internal SLAs and the time required for a human to safely intervene.

That makes sense—tying it to human intervention time and SLA impact is usually the right anchor. When you translate those SLAs into defaults, what’s the rule of thumb you use to pick a retry window/attempt budget vs. when something should move to DLQ and sit for operator action? If you’re up for it, a high-level example for one “high urgency” vs. one “low urgency” webhook (just the shape of the policy, not exact numbers) would help make the mapping concrete.

High-urgency webhooks get a tight retry window to fail fast into the DLQ for an operator, whereas low-urgency ones have a much longer, more patient retry schedule to resolve on their own.