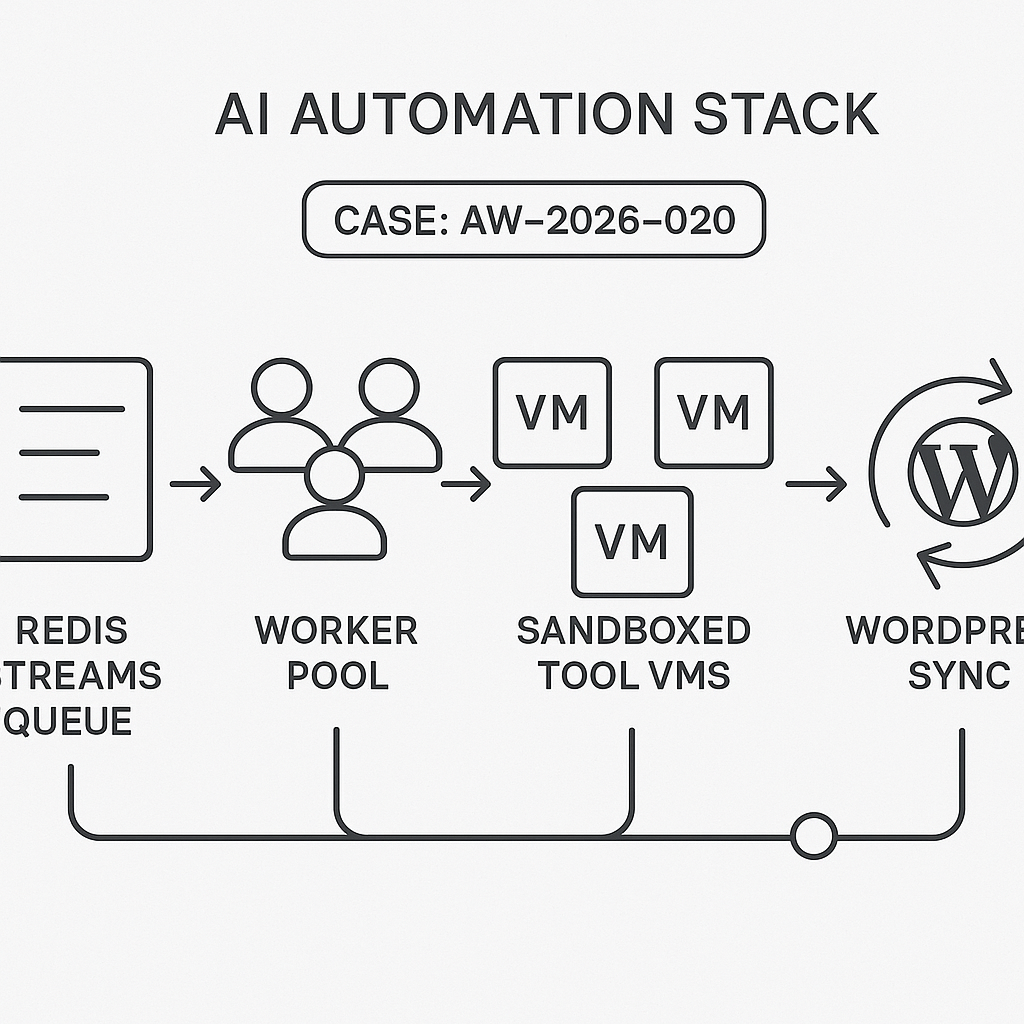

We’ve rolled out Production Agent Runner v0.6 across AI Guy in LA deployments. This release upgrades orchestration, tool sandboxing, and observability to make automation more reliable, more auditable, and faster.

What’s new

– Orchestration: Migrated task pipeline to Redis Streams + Dramatiq with backpressure controls and idempotent message keys.

– Tool runtime: Sandboxed tool execution (Firecracker microVMs on Linux hosts) with per-call CPU/mem caps and kill-switch timeouts.

– Secrets: Per-tenant secrets vault with envelope encryption (AES‑GCM) and short‑lived tool-scoped tokens.

– Observability: End-to-end OpenTelemetry traces across Django API, workers, LLM calls, and third-party APIs; log-based SLOs.

– Adapters: Unified LLM provider layer (OpenAI, Anthropic, Google) with circuit breakers, retries, and cost tags.

– WordPress sync: Background content sync job with diff-based updates and rate-limit awareness.
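To make the circuit-breaker item in the adapter layer concrete, here is a minimal Python sketch of the general pattern. It is not the Agent Runner implementation: the class name, the thresholds, and the injectable clock are all hypothetical, chosen only to keep the example small and testable.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    """Hypothetical breaker wrapping calls to a single LLM provider."""

    def __init__(
        self,
        failure_threshold: int = 3,      # assumed value, not from the release
        reset_after: float = 30.0,       # assumed cool-down in seconds
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                # Still cooling down: fail fast without touching the provider.
                raise CircuitOpenError("provider circuit is open")
            # Cool-down elapsed: let one trial call through (half-open).
        try:
            result = fn()
        except Exception:
            self._failures += 1
            # A failed trial call, or too many consecutive failures, (re)opens the circuit.
            if self._opened_at is not None or self._failures >= self.failure_threshold:
                self._opened_at = self._clock()
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

A caller would typically keep one breaker per provider and fall back to another provider when `CircuitOpenError` is raised, so a failing upstream is skipped quickly instead of being retried on every task.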

Why it matters

– Lower latency under load and fewer stuck jobs.

– Safer execution for file, browser, and data tools.

– Faster root-cause analysis with trace context.

– Cleaner multi-tenant isolation and auditability.

Architecture notes

– Queueing: Redis Streams consumer groups with at-least-once delivery; effectively exactly-once processing via dedup keys and the “outbox” pattern in Django.

– Concurrency: Work-stealing worker pools; per-tenant concurrency ceilings to prevent noisy-neighbor effects.

– Retries: Exponential backoff with jitter; DLQ for hard failures; replay via admin task inspector.

– Sandboxing: Ephemeral microVM per tool run; read-only base image; write access only to temp mount; egress allowlist.

– Telemetry: Trace and span IDs propagate via W3C Trace Context headers (traceparent); logs are correlated by trace_id for single-click drilldowns.
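The dedup-key idea from the queueing note can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than the production code: `DedupConsumer` is a hypothetical name, and the in-memory set stands in for whatever durable store (a database table or Redis set) would hold processed keys in a real deployment.

```python
from typing import Callable, Dict, Set


class DedupConsumer:
    """Hypothetical consumer that ignores redelivered messages by dedup key."""

    def __init__(self, handler: Callable[[Dict[str, str]], None]) -> None:
        self._handler = handler
        # Assumption: an in-memory set stands in for a durable key store.
        self._seen: Set[str] = set()

    def process(self, dedup_key: str, payload: Dict[str, str]) -> bool:
        """Run the handler once per key; return False for duplicates."""
        if dedup_key in self._seen:
            # Redelivery of a message that was already handled: skip it.
            return False
        self._handler(payload)
        # In a real system the key would be recorded in the same transaction
        # as the handler's side effects, so a crash cannot separate the two.
        self._seen.add(dedup_key)
        return True
```

With a queue that may deliver a message more than once, the consumer sees duplicates after crashes or retries; checking the key before doing work is what turns that into effectively-once processing.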

Performance impact (current production)

– p50 end-to-end task latency: -38%

– Failed runs due to timeouts: -62%

– Cold-start tool overhead (sandbox): +85–120 ms (an acceptable tradeoff for the added isolation)

Rollout and compatibility

– All active projects are on v0.6.

– No changes required for prompt or tool specs.

– API change: tool env variables must be requested via the secrets broker; direct env injection is blocked.

Migration notes for self-hosted clients

– Redis 7+ required. Enable lazy freeing (the lazyfree-lazy-* options) and cap stream length, for example with MAXLEN on XADD or periodic XTRIM.

– Deploy OTel Collector sidecar; export OTLP to your APM of choice.

– Allowlist the required egress domains if you run strict firewalls.
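For self-hosted setups, one way to keep streams bounded is to cap them at write time. The sketch below assumes a redis-py style client, whose `xadd` accepts `maxlen` and `approximate` arguments; the function name, stream name, and default cap are hypothetical and should be tuned to your own retention needs.

```python
from typing import Any, Mapping


def publish_capped(
    client: Any,
    stream: str,
    fields: Mapping[str, Any],
    maxlen: int = 100_000,  # assumed cap, not a value from the release
) -> Any:
    """Append an entry and let Redis trim the stream to roughly `maxlen` entries.

    `client` is expected to behave like a redis-py `Redis` instance.
    approximate=True corresponds to `MAXLEN ~`, which trims lazily and is cheaper
    than exact trimming.
    """
    return client.xadd(stream, fields, maxlen=maxlen, approximate=True)
```

For example, `publish_capped(redis_client, "agent:tasks", {"task_id": "123"})` would add an entry to a stream named `agent:tasks` (a made-up name) while keeping its length near the cap.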

What’s next

– Tracing UI in the dashboard.

– Built-in prompt versioning with canary rollouts.

– Cost and token accounting per tenant and workflow.

Questions or need help migrating? Contact us with your deployment ID.

This is a fantastic update, particularly the move to Firecracker microVMs for sandboxed tool execution. Have you noticed any significant performance overhead with the new runtime?

Thanks, John — Firecracker is a big step up for isolation, but it can introduce some overhead depending on how often you’re cold-starting microVMs versus reusing warm ones. In practice, the main costs tend to show up as startup latency and a bit of added I/O overhead, while steady-state CPU can be quite close to native if the workload is long-running enough. Have you been tracking any before/after metrics like p95 tool-call latency, cold-start rate, and host CPU/memory utilization since the rollout?

Those are the exact metrics I was looking for, thank you—I haven’t gathered any before/after data yet but will start there.